I’ve had a couple of people ask about how to use the new interactive visualizations we offer at Hockey Graphs, so I thought I’d take the time to provide a tutorial with some visual demonstrations.

Corsi

Friday Quick Graphs: Are the 2014-15 Buffalo Sabres the Worst Team of All-Time?

This is part-opportunity to finally explore this question, and part-opportunity to tout some existing and upcoming data visualizations for HG. Travis Yost has been following the absolutely terrible Sabres season all year, and has raised some questions about whether it’s an all-time worst team. He’s only been able to reach back to the admittedly bad early 2000s Atlanta Thrashers, but the historically bad team by which all others need to be measured is the 1974-75 Washington Capitals squad. Using an historical metric like 2pS%, or a team’s share of all on-ice shots-for in the first 2 periods (expressed as a percentage), we can bring the 2014-15 Sabres together with the 74-75 Caps to see where both teams stand. Note: I used the cumulative version of the measure below, and added lines for one standard deviation below league-average in both seasons.
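For anyone who wants to reproduce the cumulative version of 2pS% at home, here is a minimal sketch. It assumes you already have each game's shots for and against from the first two periods; the function name and the numbers are made up for illustration and are not the data behind the charts.

```python
from itertools import accumulate


def cumulative_2ps_pct(shots_for, shots_against):
    """Cumulative share (%) of first-two-period shots, game by game."""
    cum_for = accumulate(shots_for)
    cum_against = accumulate(shots_against)
    return [
        100.0 * f / (f + a) if (f + a) else float("nan")
        for f, a in zip(cum_for, cum_against)
    ]


# Invented two-period shot totals for four games (not real data).
shots_for = [15, 20, 10, 15]
shots_against = [25, 20, 30, 25]
print(cumulative_2ps_pct(shots_for, shots_against))
```

Because the running totals are summed before dividing, a single lopsided game matters less and less as the season goes on, which is why the cumulative line settles down over time.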

For as bad as Buffalo has been, they haven’t quite matched the futility of the 74-75 Capitals…nor should they. The Capitals were an expansion team that year, and unlike in other years the NHL did not really reach out to ensure the expansion teams in 1974-75 were given a good base to build from. These were also the peak years of the World Hockey Association, which made professional level talent even more diffuse than normal. The other expansion team in 74-75, the Kansas City Scouts, lasted two years before moving to Colorado to become the Rockies (the team subsequently moved to New Jersey in 1982-83 and changed their name to the Devils).

I included the standard deviations for the leagues in 1974-75 and 2013-14 (I haven’t compiled the data for 2014-15 yet, but this should be close enough), and even by those markers the Capitals compared markedly worse to their league than did the Sabres. But once again, the Capitals had a reasonable excuse, while the Sabres have walked into this situation with eyes wide open.

For those interested, I also put together 2-period shots-for and shot-against rates (and stretched them out to per 60 minutes) to get a rough sense of offense-versus-defense for both teams.
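The "stretching" to per 60 minutes is just a rescaling. As a rough sketch, assuming two full 20-minute periods (40 minutes) per game and ignoring any strength-state adjustments, it could look like this; the helper name and the sample number are hypothetical.

```python
def per_60(shots, minutes_played):
    """Scale a shot count to a per-60-minute rate."""
    return shots * 60.0 / minutes_played


# Assumption: the first two periods total 40 minutes of play.
TWO_PERIOD_MINUTES = 40.0

# Invented example: 22 shots for through two periods.
print(per_60(22, TWO_PERIOD_MINUTES))
```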

I added a couple of extra filters to the charts (league averages and standard deviations, as well as 20-game moving averages, for all the measures I used), which you can select by clicking on the grey “Team” bars and then clicking “Filter.”
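If you would rather compute a 20-game moving average yourself, a simple trailing window does the job. This is only a sketch: it assumes a plain trailing average of per-game values, which may not match exactly how the charts were built, and the function name is my own.

```python
def moving_average(values, window=20):
    """Trailing moving average; the first result covers games 1..window."""
    averages = []
    running = 0.0
    for i, value in enumerate(values):
        running += value
        if i >= window:
            # Drop the game that just fell out of the window.
            running -= values[i - window]
        if i >= window - 1:
            averages.append(running / window)
    return averages


# Tiny invented series with a 2-game window, just to show the shape.
print(moving_average([1, 2, 3, 4, 5], window=2))
```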

The Hockey Graphs Podcast (EP 3): Hot Dogs = Sandwiches

Welcome to the third episode of the Hockey Graphs podcast, where Rhys Jessop (of Canucks Army and That’s Offside) and Garret Hohl continue talking about hockey while learning how to podcast. Join us as we lament the death of Corsi. We also talk about Mike Richards hitting the waiver wire, the All-Star Game, and (as always) some prospects and draft theory.

Trading Off: How Much Possession Can My Team Surrender and Still Win?

Photo by Michael Miller, via Wikimedia Commons; altered by author

Within the continuing discussions over the value of possession metrics, and the veracity of shot quality or shooting talent measures, there’s a point that seems to have slipped through the cracks. While there’s a spectrum of attitudes about possession and shot quality/talent, neither entirely refutes the importance of the other – and with that thinking, it’s worth considering how much you can sacrifice in one and still maintain success by the other. Put more simply, how little can a team possess the puck and still expect to shoot their way to success?

One of the many issues with the Toronto Maple Leafs

Randy Carlyle was recently fired from the Toronto Maple Leafs. This brought joy to many Leafs fans online, as many believed, legitimately, that Carlyle was a source of the Maple Leafs consistently being outshot, out-possessed, and out-chanced.

Of course, firing Carlyle will not spontaneously turn the Leafs into a contender in the East. There are issues with the Maple Leafs that will take some time for Brendan Shanahan and company to fix.

Back to Basics: Forward Univariate Analysis

League-wide univariate analysis isn’t very sexy, which is why you rarely see it used in the hockey blogosphere. Still, the information is necessary for better understanding what we are describing and for adding context. It is also useful to look back on whenever a variable does not behave in a model as you initially hypothesized.

I gathered all player-season data for each full (non-lockout) season available in the “Behind the Net era,” keeping only forwards with 100 or more minutes. These seasons were combined into one massive sample of 2368 player seasons.

Schedule Adjustment for Counting Stats

Edit: There is another version of this article available as a PDF, which includes more explicit mathematical formulas and an example worked in gruesome detail.

Rationale

We all know that some games are easier to play than others, and we all make adjustments in our head and in our arguments that make reference to these ideas. Three points out of a possible six on that Californian road-trip are good, considering how good those teams are; putting up 51% possession numbers against Buffalo or Toronto or Ottawa or Colorado just isn’t that impressive considering how those teams normally drive play, or, err, don’t.

These conversations only intensify as the playoffs roll around: really, how good are the Penguins, who put up big numbers in the “obviously” weaker East, compared to Chicago, who are routinely near the top of the “much harder” Western Conference? How can we compare Pacific teams, of which all save Calgary have respectable possession numbers, with Atlantic teams, who play lots of games against the two weak Ontario teams and the extremely weak Sabres?

Adjusted Possession Measures

A little while ago I wrote an article at SensStats discussing score effects and suggesting a new formula which we might use to compute score-adjusted Fenwick. This article addresses several interesting questions and new avenues that were suggested to me by various commenters.

- The method in the above-linked article simultaneously adjusts for score and for venue (that is, home vs away). It’s interesting to estimate the relative importance of these two factors. As we’ll see, it turns out that adjusting for score effects is dramatically more important than adjusting for venue effects.

- We might consider adjusted Corsi instead of adjusted Fenwick; it turns out that adjusted Corsi is a better predictor of future success than adjusted Fenwick at all sample sizes.

- Most interestingly, we might consider how score effects vary over time, and see if we can create a score-adjusted possession measure that takes this variation into account. We find here that performing such adjustments is indistinguishable in predictivity from the naive score-adjustments already considered.

Several people have pointed out that score effects have a strong time-dependence. At least as far back as 2011, Gabriel Desjardins (@behindthenet) noted the effect, and readers with keener memories than mine will no doubt remember still earlier examples. Just last week, Fangda Li (@fangdali1) wrote an article arguing that score effects play virtually no role outside of the third period. This article will show that, while score effects are magnified as the game wears on, time-adjustment for possession calculations is not justified.

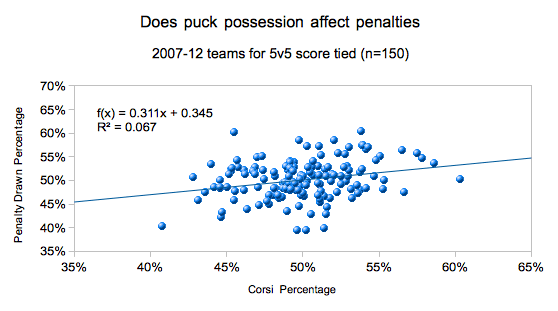

Friday Quick Graph: Does puck possession affect penalty differentials?

Using data from War-On-Ice.com, I grabbed the penalty and Corsi differentials for all teams for 5v5 score tied minutes. The whole point was to look at whether or not possession plays a role in a team’s penalty differential.

Above we see a weak but real relationship, with about 6.7% of penalty differentials being explained via possession.

From the regression curve, we estimate that the average difference between a top and a bottom possession team is about 11 penalties drawn per season in 5v5 score-tied minutes. Of course, there is also the opportunity to draw penalties at other team strengths and in other score situations. (The bottom/top difference uses the 40-60 rule.)
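For readers who want to see where a "percent explained" number comes from, here is a minimal sketch of an ordinary least-squares line and its R². It assumes a straight-line fit, which may not be the exact curve used in the graph, and the data points below are invented rather than pulled from War-On-Ice.com. An R² of about 0.067 would correspond to the 6.7% quoted above.

```python
def fit_line(xs, ys):
    """Least-squares line y = intercept + slope * x, plus R-squared."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = (sxy ** 2) / (sxx * syy)
    return slope, intercept, r_squared


# Invented Corsi differentials (x) and penalty differentials (y).
corsi_diff = [-120, -40, 10, 60, 150]
penalty_diff = [-6, 3, -2, 5, 1]
slope, intercept, r_squared = fit_line(corsi_diff, penalty_diff)
print(f"slope={slope:.4f}, intercept={intercept:.2f}, R^2={r_squared:.3f}")
```

Multiplying the slope by the gap in Corsi differential between a strong and a weak possession team gives an estimate of the gap in penalty differential, which is the kind of calculation behind a top-versus-bottom comparison.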

Gordie Howe vs. Bobby Orr vs. Wayne Gretzky vs. Sidney Crosby: Not Your Typical WOWY

Photo by “Djcz”, via Wikimedia Commons

With or Without You analysis, often referred to as WOWY, frequently involves comparing the performance of a team or of particular players when a single player is and isn’t playing. While the approach is a risky one (sample size is a pretty big issue), it can actually be quite telling when you collect enough data.

The value of modern WOWY is that you can separate data from precisely the seconds a player was on the ice from the seconds they weren’t. Historical WOWY, on the other hand, cannot do much better than taking data from games a player played versus games they didn’t. To this end, then, I wanted to see if historical WOWY can tell us much of anything, and the best way to do that is to focus on players whose value is undisputed. In this case, I went for WOWYs of the big guns, four of the best players across the eras of NHL history: Gordie Howe, Bobby Orr, Wayne Gretzky, and Sidney Crosby.
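To make the game-level idea concrete, here is a rough sketch of a historical WOWY split. It assumes each game is recorded as whether the player dressed, plus the team's goals for and against, and it uses goal share as the measure; both choices are mine for illustration and may differ from what the full post uses. The games below are invented.

```python
def wowy_goal_share(games):
    """Return (goal share % with the player, goal share % without).

    Each game is a tuple: (player_played, goals_for, goals_against).
    """
    def share(subset):
        goals_for = sum(gf for _, gf, _ in subset)
        goals_against = sum(ga for _, _, ga in subset)
        total = goals_for + goals_against
        return 100.0 * goals_for / total if total else float("nan")

    with_player = [g for g in games if g[0]]
    without_player = [g for g in games if not g[0]]
    return share(with_player), share(without_player)


# Invented games: two with the player in the lineup, two without.
games = [(True, 4, 2), (True, 3, 3), (False, 1, 3), (False, 2, 2)]
with_pct, without_pct = wowy_goal_share(games)
print(f"with: {with_pct:.1f}%  without: {without_pct:.1f}%")
```

The "without" bucket is usually much smaller than the "with" bucket for star players, which is the sample-size problem mentioned above.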
