In part 1, I described three “pen and paper” methods for evaluating players based on performance relative to their teammates. As I mentioned, there is some confusion about what differentiates the relative-to-team (Rel Team) and relative-to-teammate (Rel TM) methods (it doesn’t help that the two metrics have the same name save four letters), so I thought it would be worthwhile to compare them in various ways. The following comparisons will help us explore how each one works, what each tells us, and how we can use them (or which one we should use). I’ll also attempt to tie it all together as we look into some of the adjustments I covered at the end of part 1.

A quick note: WOWY is a unique approach, which limits its comparative potential in this regard. As a result, I won’t be evaluating or comparing the WOWY method further. However, we’ll dive into some WOWYs a bit later to explore the Rel TM metric.

Rel Team vs. Rel TM

Note: For the rest of the article, the “low TOI” adjustment will be included in the Rel TM calculation. Additionally, “unadjusted” and “adjusted” will indicate whether the team adjustment is applied. All data used from here on is from the past ten seasons (’07-08 through ’16-17), is even-strength, and includes only qualified skaters (a minimum of 336 minutes for forwards and 429 minutes for defensemen per season, as estimated by the top 390 F and 210 D per season over this timeframe).
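For readers who want to reproduce the qualification filter, here is a minimal sketch of one way the cutoffs could be estimated. It assumes the threshold for each position is the even-strength TOI of the N-th ranked skater in each season, averaged across seasons; that reading of "as estimated by" is my assumption, and the names `toi_cutoffs`, `is_qualified`, and the `(season, position, toi_minutes)` row layout are hypothetical.

```python
from collections import defaultdict

# Ranks taken from the note above: top 390 forwards and 210 defensemen per season.
TOP_N = {"F": 390, "D": 210}


def toi_cutoffs(rows, top_n=TOP_N):
    """Estimate a TOI cutoff per position.

    rows: iterable of (season, position, toi_minutes), one per skater-season.
    Assumption: the cutoff is the mean, across seasons, of the TOI of the
    N-th ranked skater at that position.
    """
    by_group = defaultdict(list)
    for season, pos, toi in rows:
        by_group[(season, pos)].append(toi)

    per_pos = defaultdict(list)
    for (season, pos), tois in by_group.items():
        ranked = sorted(tois, reverse=True)
        # If a season has fewer than N skaters, fall back to the lowest TOI.
        idx = min(top_n[pos], len(ranked)) - 1
        per_pos[pos].append(ranked[idx])

    return {pos: sum(vals) / len(vals) for pos, vals in per_pos.items()}


def is_qualified(pos, toi, cutoffs):
    """True if a skater-season meets the cutoff for its position."""
    return toi >= cutoffs[pos]
```

With the real data, `is_qualified("F", toi, {"F": 336, "D": 429})` would apply the thresholds quoted above directly, without re-estimating them.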

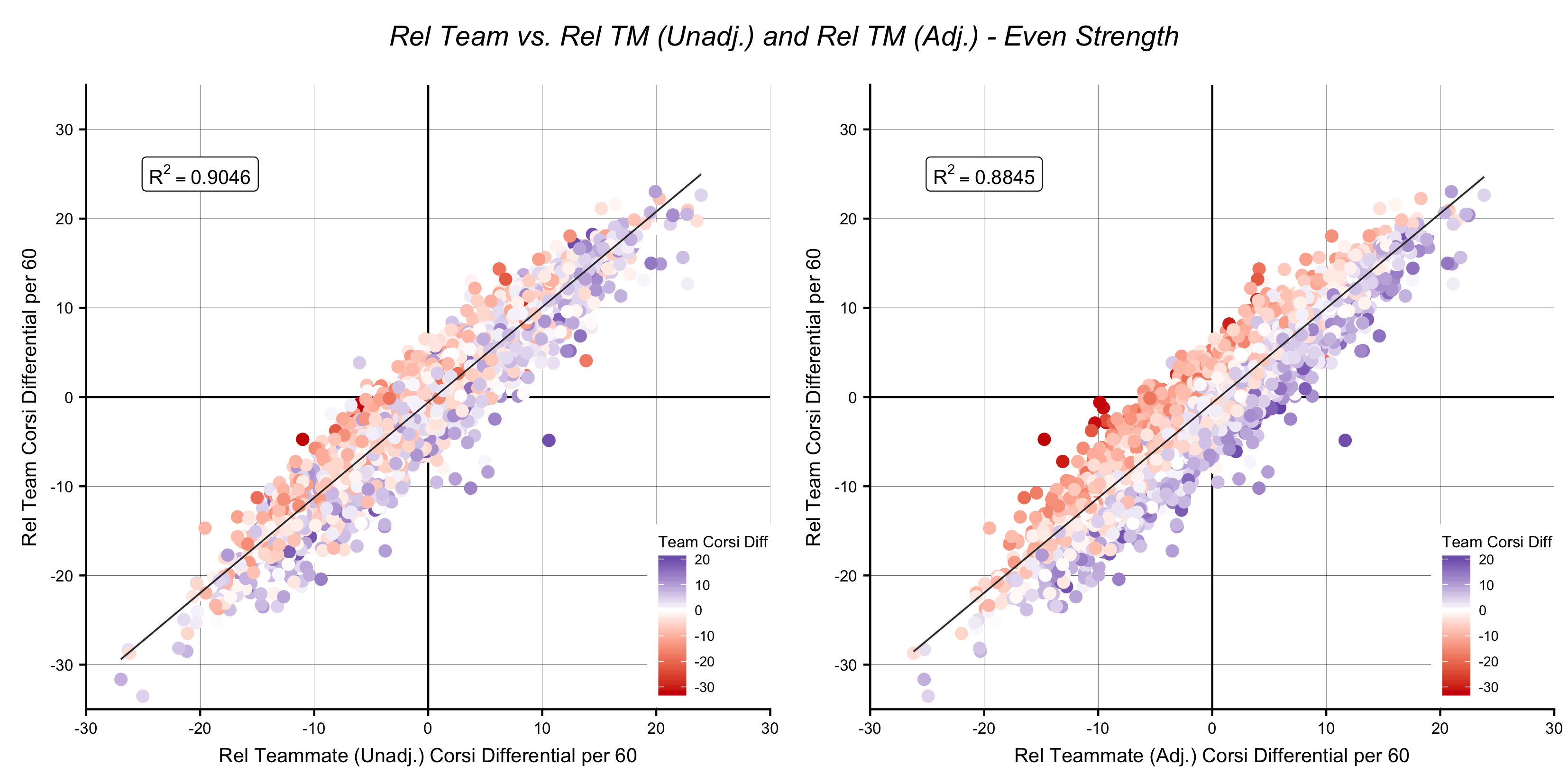

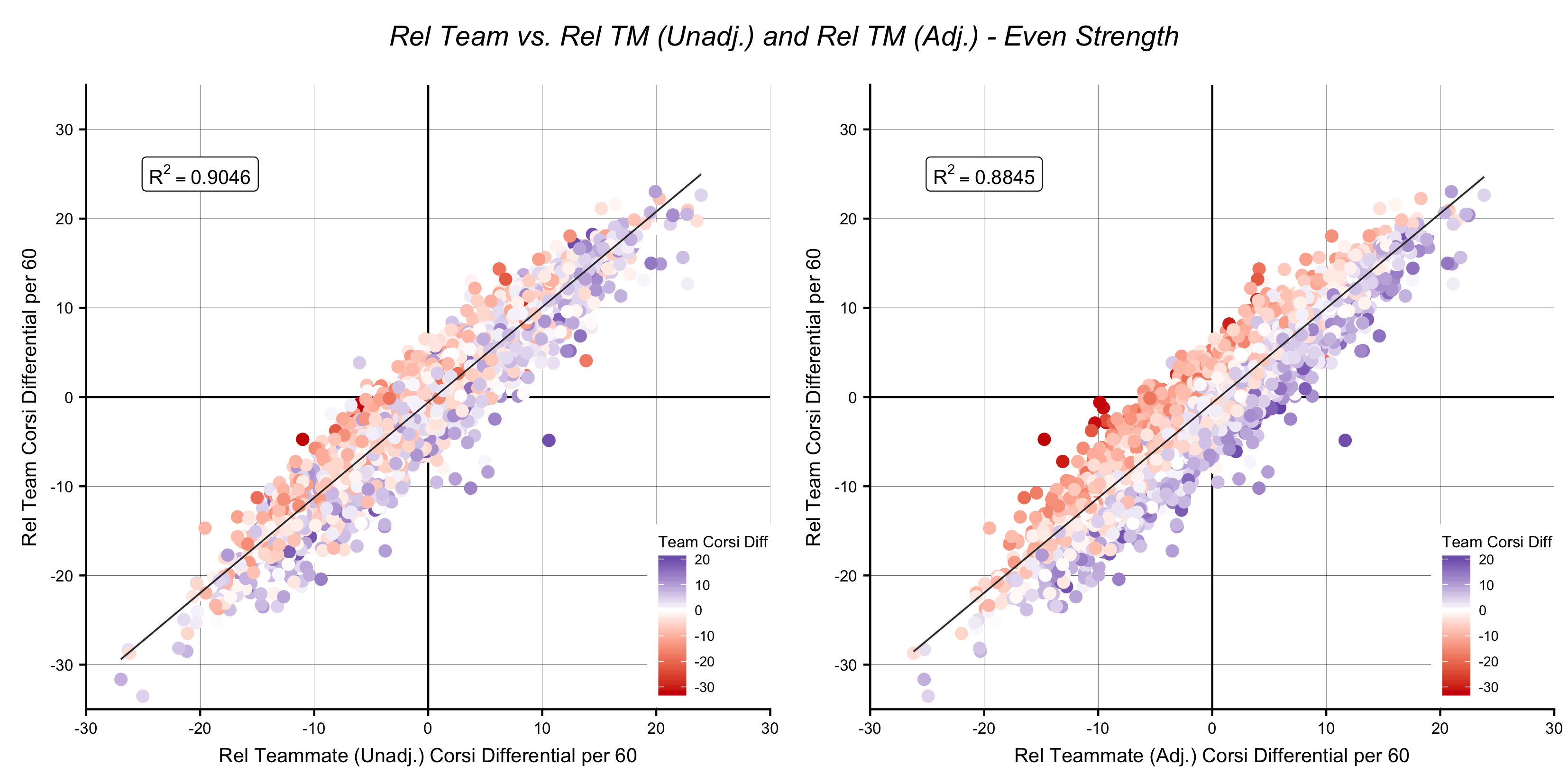

Below, I plotted Rel Team against both the adjusted and unadjusted Rel TM numbers. I have shaded the points based on each skater’s team’s EV Corsi differential in the games that skater played in:
